Meeting notes provided by Gemini

- Overview of Segment Anything Model (SAM) and Segmentation: Josh Phillips initiated the presentation by gauging the audience’s experience with computer vision models like YOLO and segmentation. They explained that the SAM family of models handles various media (images, video, 3D, and audio) by identifying and segmenting objects, which is used for downstream applications (00:14:41). SAM models are particularly effective at producing highly detailed segmentation masks, which are more granular than bounding boxes (00:15:43).

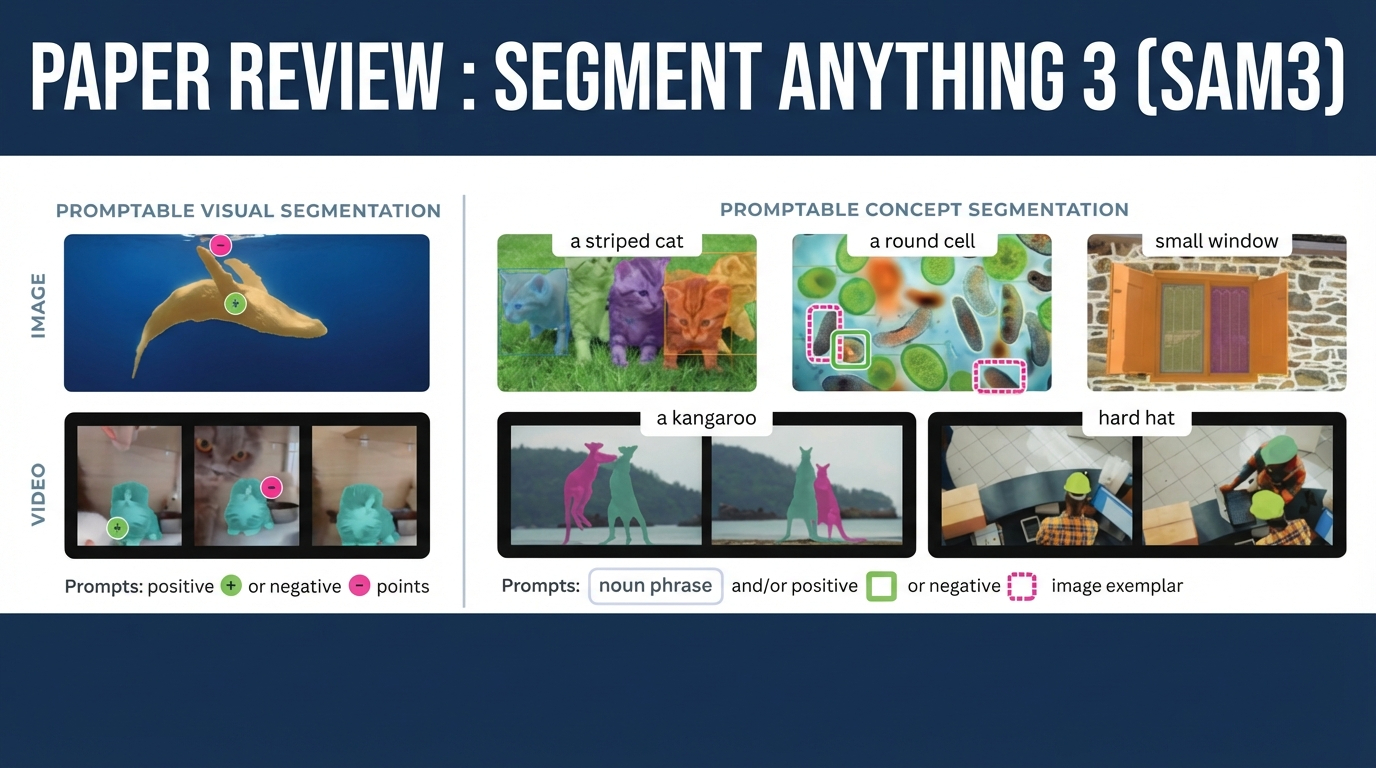

- SAM’s Interactive and Generalizable Capabilities: The SAM models were designed to be highly generalizable and easy to fine-tune, being labeled as “segment anything” (00:15:43). SAM 1 and 2 primarily used interactive point masks, where a user clicks inside an object for segmentation, making automation difficult (00:16:36). SAM 3 introduces the new capability of basic text prompts for segmentation, a robust feature not well-supported by previous models like Dino (00:17:28).

- SAM 3 Demos and Agentic Behavior: Josh Phillips began the demonstration section, noting the official playground site was at capacity but that they would use Hugging Face and code spaces (00:18:23). A demonstration showed SAM 3 masking penguins in a video using a text prompt, highlighting the model’s new ability to assign and maintain unique IDs for objects even when they move off-screen, a capability cited as unique among current models (00:19:20). Another example showed SAM 3 utilizing an agentic loop to iteratively prompt for complex segmentations, such as finding “airplanes with the letter AE on its body” (00:21:18).

- Multimodal Segmentation Extensions: Josh Phillips introduced three additional models from the same family as SAM 3: a 3D model that generates a mesh from point masks (00:22:21), a human-focused 3D model for generating pose meshes and bone structure useful for character rigging (00:27:25), and SAM Audio, which can segment audio based on arbitrary text prompts, such as isolating male vocals (00:23:06). The audio segmentation demonstrated applying effects like reverb and a robot voice exclusively to the segmented voice (00:25:49).

- SAM 3 Model Architecture and Input Constraints: Josh Phillips transitioned to discussing the SAM 3 paper, focusing on the model’s ability to handle text prompts for positive and negative segmentation exemplars (00:29:24). They detailed that SAM 3 is superior to previous models like SAM-OWL due to its fine-grained masking, achieved through training with massive datasets focused on attribute annotation (e.g., “plaid shirt”) rather than just nouns (00:30:29). J. Langley asked about the limit for text prompts, which Josh Phillips estimated is capped at around 32 tokens, emphasizing that the prompt needs to remain simple to avoid breaking the system (00:32:37).

- Detection Transformer and Global Understanding: Josh Phillips explained that the model utilizes a Detection Transformer (DETR), a model from 2020, to enhance the capabilities of the Convolutional Neural Network (CNN) by using a self-attention mechanism. This allows the model to gain a global understanding of features across the entire image, addressing the CNN’s limitation of only focusing on local features (00:37:07).

- The Core Components of the SAM 3 Detector Module: The SAM 3 architecture has three inputs: an image, text, and geometry (like image exemplars). These inputs feed into a multimodal decoder, which performs early fusion of the features, and then into a pixel decoder for segmentation (00:40:44). A key component is the perception encoder, which produces a “presence token”—a boolean indicating if the queried object is present—which aids in splitting the local and global detection tasks (00:41:47) (00:45:56).

- Segmentation Mask and Memory Management: Josh Phillips explained that the detector module integrates the pixel decoder with a detector decoder that uses a “presence token” and queries to determine confidence and output masks, boxes, or scores (00:41:47). The model also employs “masklets,” which are used primarily for video to maintain an object’s identity across frames, feeding into a first-in, first-out memory bank (00:44:46). Josh Phillips added that the model is trained to drop out memory frames when confidence is low to avoid biasing subsequent segmentation (00:53:35).

- Clip Model and the Perception Encoder: The perception encoder, which provides the model’s text backbone, is a Clip-based model (00:45:56) (00:49:27). Josh Phillips explained an interesting finding that the most accurate embeddings for downstream tasks are taken from the middle layers of the network, as the accuracy decreases in the later layers due to the model’s bias towards language output (00:47:08). The perception encoder is trained to predict these middle-layer outputs, a technique that improves its performance (00:48:24).

- LLM Annotation and Model Distillation: Josh Phillips noted that the simplicity of the SAM 3 prompting strategy allowed the use of large language models, like Llama 32, for effective data annotation, enabling a much larger and cleaner data set (00:54:56). They mentioned that SAM 3’s performance surpassed the teacher model it was distilled from, which is uncommon in distillation processes (00:57:54).

- SAM 3 as a Tool for Agentic Systems: The final discussion highlighted SAM 3’s role as a tool within an agentic system. An external agent feeds small text queries to SAM 3 and judges the confidence score to decide whether to continue the iterative process (00:55:58). This agentic loop is necessary because even powerful models like Gemini and Florence 2 are not yet capable of performing the fine-grained segmentation masking that SAM 3 can achieve (00:56:59).

- Real-time Object Tracking Performance and Model Distillation: The speaker discussed the performance of the model for real-time object tracking, noting that tracking 10 objects required two H200s, 28 objects required four H200s, and 64 objects required eight H200s for real-time performance at 30 frames per second. They also noted that while this data reflects the base model without quantization or distillation, the model can be easily distilled and fine-tuned for specific tasks to increase speed, referencing examples like a “turbo” variant (00:58:55).

- SAM 3 Data Set and Training Phases: The training data for SAM 3 was collected in three phases, which differed from prior recipes like the Juan diffusion transformer by primarily using images for 75% of the data and adding video at the end. This inverse training recipe, compared to frame-by-frame methods, is due to the “generated all at once method” used by SAM 3 (01:00:03). Phase one involved human verification, which led to subsequent phases involving human and AI verification, where the AI verifiers eventually surpassed human performance (01:01:31).

- Hard Negatives and Data Set Inclusions: During the training runs, hard negatives were introduced to train the model against false positives by specifying “what is not in the image as what is there”. A major inclusion in the SAM 3 data set, which was not present in SAM 2, was medical imaging data, opening up new applications for medical diagnosis (01:01:31). Aerial data was also added as a subset of the data, potentially under categories like “building and location stuff” (01:02:43).

- SAM 3 Agent Capabilities and Examples: Examples were shown illustrating the SAM 3 agent’s ability to process detailed prompts and perform iterative segmentation, even with smaller models like 7B variants, for fine-tuned tasks. The agent successfully identified subtle elements, such as a lion’s mane to indicate a male animal or the specific component where bees draw nectar (01:03:43). The agent could also identify abstract concepts, such as the part of a picture that is “funny and out of place,” by selecting the antlers on a subject (01:04:40).

- Discussion of SAM 3 Agent Failure Cases: A failure case was presented where the agent struggled with a complex referential query, failing to correctly segment “a black object that protects you from the rain being held by a person in jeans” and incorrectly segmenting a person not wearing jeans. This failure may be attributed to issues with the segmentation model or the specific model chosen for the task, which was Quinn 2.5 VL72B (01:04:40).

- Claude Agent Integration and Segmentation Task: The speaker demonstrated using Claude, equipped with a skill to call SAM 3, to perform 10 varying tasks, including a hard task requiring the segmentation of a railing on a building, which was not the main part of the image (01:05:38). They noted that Claude became “quite frustrated” with the SAM 3 model during previous attempts at this task (01:06:50). The speaker initiated a demonstration of Claude attempting the task of segmenting the “architectural feature on the building that is designed to people prevent people from falling from the heights,” like a railing (01:05:38).

- Tool Calling and Agent Architecture Discussion: J. Langley raised questions about the difficulties encountered when trying to get Pedantic AI to perform tool calls with binary or image responses, often requiring complex base64 encoding or URLs for image passing. Josh Phillips responded by noting that platforms like Claude have an out-of-the-box runtime, a sandbox, and Bash, which differs from Pyantic, which requires specific tools and does not inherently have a loop feature to continue iterating until a task is complete (01:08:33).

- Potential Applications for SAM 3 and 3D Modeling: Lorin Bales asked about using SAM 3’s pixel positioning capabilities for inferring physical location via coordinate transformations and pairing the segmentation output with other systems for 3D modeling. Josh Phillips confirmed that the mask output could be fed into an “image to 3D mesh pipeline,” suggesting potential for generating items like SDL files for 3D printing (01:10:23). They also discussed the possibility of using the model in fields like protein folding, mentioning that AlphaFold 3 is a diffusion transformer-based model, and suggesting a possible future talk on the topic (01:11:27).

- Agent Memory and Adaptive Behavior Demonstration: Claude initially attempted to use the specific term “ballastrad” for the railing segmentation task but then displayed adaptive behavior by recalling past frustration with the term, which was noted as an interesting, unintentional callback to a memory review discussion (01:12:23). The agent leveraged its memory feature to pivot from the term “ballastrad” but, due to the difficulty of the task, ultimately settled for a result that was less optimal than could have been achieved without the memory influence, suggesting an interesting trade-off in agent behavior (01:14:14) (01:16:22).

- Using Agent Workflow for Training Data Generation: It was proposed that while the current agent looping workflow is not suitable for real-time or critical tasks, it can be valuable for generating training data by performing segmentations from a teacher model. This process is useful for concepts that are hard to mask out and can be utilized for purposes like Reinforcement Learning (RL) training (01:15:18).

- Advanced Agent Segmentation Examples: Other tasks were reviewed, showing the agent’s ability to segment non-primary image elements, such as “the piece of equipment designed for scoring points in the sport being played here” (the basketball goal) and “items in the trunk that would spoil if left in a hot car for several hours” (food) (01:17:37). The agent also demonstrated its ability to perform referential segmentation, such as segmenting “the child standing furthest to the right,” a task that the base SAM 3 model cannot do without an agent framework (01:18:41).

- Testing Segmentation of Abstract Concepts: Pretty Woods questioned the model’s performance on abstract, vague concepts such as segmenting “the most expensive object in the scene” (01:19:48). When tested, Claude, tied with the SAM 3 agent, identified the pickup truck as the most expensive object, explicitly defining the road and building elements as background infrastructure and thus not discrete objects (01:23:33).

- Fine-Tuning and Model Behavior Influences: Pretty Woods shared an experience where a large language model’s performance was negatively affected by referencing prior chats from 2023 where they attempted to guide it to better performance, causing the model to revert to older behaviors (01:26:22). Josh Phillips noted that the Claude model being used for the current demonstration explicitly designated the road and building as background elements, which guided its choice to segment the pickup truck as the most expensive discrete object (01:23:33).

- SAM Geo and Integration with Geographic Data: J. Langley noted that they are fine-tuning SAM 2.1 and may use SAM 3, particularly SAM GO3, a wrapper that integrates the SAM model with geographic data. This wrapper facilitates the projection of geodata into pixel space, tiles images for the SAM model, and re-transforms the pixel points back into coordinates, which is beneficial for applications like agriculture (01:29:44).

- SAM Audio for Non-Visual Data: Phil Bording asked if the SAM model could be used to segment patterns (wiggles) from sensor data, such as spectrograms representing sounds like motorcycles or people walking (01:33:03). Josh Phillips confirmed that the SAM 3 audio model is designed for this, as audio models typically convert sounds into MEL spectrograms (an image format) for processing and labeling, making that paper the relevant resource for such tasks (01:34:14). They concluded that the base SAM 3 model focuses on real-world scene segmentation but can be fine-tuned to understand specifics like different species of birds if it has a base understanding of the general category (01:35:12).